Kubernetes nodes – the machines responsible for running your container workloads – can come in a number of shapes, sizes, and configurations. One common deployment pattern, however, is a lack of in-transit encryption between them.

Another common deployment pattern? Lack of TLS support on the container workloads themselves. After all, who wants to set up and manage a PKI (Public-Key Infrastructure) and a private CA (Certificate Authority) for tens or hundreds of microservices, and get the certificates to be trusted by all workloads? I don’t know about you, but that doesn’t sound like a lot of fun to me.

Why should I care about in-transit encryption and TLS for container workloads?

Historically, container-based workloads have used cleartext HTTP for traffic and let the load balancers fronting them deal with TLS. This works well, but it leaves traffic between the nodes themselves, and between the nodes and the load balancer, exposed to prying eyes.

Before we go much further, I’d like to lay out why we’re even talking about this. In the cloud-first world, there’s a feeling of safety found within the walls of your AWS VPC. It’s your virtual private network, shared with nobody else (it says ‘private’ right in the name!), in the safe haven that is your chosen AWS region.

That said, AWS VPC is entirely a software-based construct. Just like VLANs, VPC traffic is simply a logical separation. On the wire, you and thousands of other AWS customers share electrical signals, passing through networking equipment that may be the target of sophisticated attackers. Using TLS between your workloads will not only secure the traffic, but help you authenticate different endpoints. And if you, for example, provide services to the U.S. Federal Government, you may care a great deal, as the data being transmitted may be extremely sensitive.

While we’re on the topic of government, it’s worth mentioning that security controls within NIST 800-53 are the security bible of U.S. Federal, State, and Local Governments, as well as many other government-adjacent organizations. These entities not only use NIST 800-53 for themselves; they also often require vendors to adhere to it as well (FedRAMP, as the primary example). NIST 800-53 SC-8(1) specifically mandates encryption between systems, and enforcement of this control upon cloud-based vendors is increasing significantly.

What options are there to manage TLS between workloads?

In the Kubernetes ecosystem, there are some options for layering on encryption in-transit by employing a Service Mesh, which describes how network traffic can go from one service to another. The most popular means by far is Istio, an ‘everything and the kitchen sink’ solution for controlling container traffic behavior. While it’s got the name recognition in this space, Istio is also notoriously complex, and running it across a large production infrastructure can lead to meaningful increases in resource consumption, unexpected problems, and a need for custom-engineered solutions.

It is, however, quite performant.

Are there other options? Indeed there are! However, since I haven’t introduced the AWS Nitro architecture yet, you’ve probably already assumed I’m going to offer them up and immediately shoot them down. You would be right.

Another choice for in-transit encryption is Cilium. This is a really cool project that makes encryption of inter-node traffic much more straightforward than managing a production roll-out of Istio. That said, it also causes a nearly 10x reduction in effective bandwidth in controlled benchmarking tests. Ouch.

Finally, it's worth mentioning that none of the aforementioned solutions generate key material with a FIPS-validated cryptographic module. That could be an entire post on its own, but in short: FIPS-validated cryptography is a big box to check for government customers and very large enterprises. Not having it can be problematic if you wish to store or process data for these organizations.

Having taken a brief look at the Service Mesh approach, we’ll focus the remainder of this post on the AWS Nitro Architecture and its implementation in a Kubernetes environment.

AWS Nitro Architecture

In November of 2017, AWS announced the Nitro System: a four-year-long effort to offload storage, networking, and management tasks to a custom-built board that is physically attached to the host. Over the next year and a half, AWS quietly worked to build encryption support between certain Nitro-enabled instance types, and provided a detailed unveiling during the inaugural AWS re:Inforce conference in June of 2019.

Communication between these special Nitro instance classes, when the instances are located within the same VPC, is fully encrypted using AES at line-rate speeds (up to 100 Gbps). By utilizing these encryption-capable Nitro instance classes for our Kubernetes nodes, we get inter-node traffic encryption “for free”, without layering on new software or having to compromise on performance.

As of publication, the following AWS EC2 instance types support this feature:

C5n, G4, I3en, M5dn, M5n, P3dn, R5dn, and R5n

Be sure to check the AWS documentation for the latest information on supported instance types (under “Encryption in transit”).
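To see which nodes in an existing cluster fall outside that list, a rough check can be scripted against the output of `kubectl get nodes -o json`. The sketch below is illustrative only: the function names are hypothetical, the class list is copied from this post and may be out of date, and it assumes your nodes carry the well-known `node.kubernetes.io/instance-type` label (or its older `beta.kubernetes.io/instance-type` form).

```python
# Instance classes listed in this post; verify against the current AWS documentation.
# Note: the names must match the prefix used in real instance type names
# (for example, a "G4" family instance may appear as "g4dn.xlarge").
ENCRYPTION_CAPABLE_CLASSES = {"c5n", "g4", "i3en", "m5dn", "m5n", "p3dn", "r5dn", "r5n"}

# Well-known node labels that hold the cloud instance type.
INSTANCE_TYPE_LABELS = (
    "node.kubernetes.io/instance-type",
    "beta.kubernetes.io/instance-type",
)


def instance_class(instance_type):
    """Return the part of an instance type before the first dot, lowercased."""
    return instance_type.split(".", 1)[0].lower()


def nodes_without_encryption(node_list):
    """Return (node name, instance type) pairs for nodes outside the allowed classes.

    `node_list` is the parsed JSON of `kubectl get nodes -o json`.
    Nodes with no instance-type label are flagged with a type of None.
    """
    flagged = []
    for node in node_list.get("items", []):
        metadata = node.get("metadata", {})
        labels = metadata.get("labels", {})
        instance_type = next(
            (labels[key] for key in INSTANCE_TYPE_LABELS if key in labels), None
        )
        if (
            instance_type is None
            or instance_class(instance_type) not in ENCRYPTION_CAPABLE_CLASSES
        ):
            flagged.append((metadata.get("name"), instance_type))
    return flagged
```

One way to use it is to save `kubectl get nodes -o json` to a file, load it with `json.load`, and pass the result to `nodes_without_encryption`. Because encryption applies to traffic between two capable instances, a single flagged node means some node-to-node paths are not covered.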

In addition to running nodes on specific instance types, you’ll also need to ensure that you’re running a modern version of the AWS VPC CNI.

Implementation Considerations

My statement of “for free” above is in quotes for good reason: the encryption-capable Nitro instance classes may come at a higher price point than the instance you use today. Depending on the profile of your workload, the newer instance types may offer enough of a boost to workload performance that you end up running fewer nodes, making the difference a wash.

Take care to understand which instance types may be the best fit for your workloads, and don’t be afraid to experiment.
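As a back-of-the-envelope illustration of the "wash" scenario, the sketch below computes how many of the pricier nodes you could run for the same hourly spend. The function name and all prices are hypothetical placeholders, not AWS list prices; plug in your own numbers.

```python
import math
from fractions import Fraction


def break_even_node_count(current_nodes, current_hourly_price, new_hourly_price):
    """Largest number of new nodes whose total hourly cost does not exceed current spend.

    Prices are converted through their string form to exact fractions so that
    values like 0.20 do not pick up floating-point rounding error.
    """
    current_spend = current_nodes * Fraction(str(current_hourly_price))
    return math.floor(current_spend / Fraction(str(new_hourly_price)))


# Hypothetical example: 10 nodes at $0.20/hour today, new instance type at $0.25/hour.
print(break_even_node_count(10, 0.20, 0.25))
```

With these made-up numbers, the move is cost-neutral only if the workload fits on 8 or fewer of the new nodes, which is exactly the kind of claim worth measuring rather than assuming.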

It’s important to remember that utilizing a Service Mesh also comes with a cost, should you choose that route instead. Istio is performant, but utilizes sidecars to encrypt traffic, meaning increased resource utilization. Increased resource utilization equals increased infrastructure cost. Add in engineering time, and you may end up down an expensive rabbit hole. Spend some time to research, do proof-of-concepts, and most importantly: measure everything!

I previously mentioned the importance of generating key material with a FIPS-validated cryptographic module if you serve government or very large enterprises. A natural follow-up question would be, “So, does Nitro generate keys with a FIPS-validated cryptographic module?” The answer seems to be… not exactly. AWS has been pretty coy with the exact details in public (for good reason, some would say), but what we do know is that AWS Key Management Service – which is FIPS-validated – first generates seeds.

These seeds are then sent to the Nitro controllers, and are subsequently used for the actual key generation. At a minimum, AWS is a FedRAMP-authorized IaaS provider, so it’s likely this component has received a strong amount of scrutiny and was found to be sufficiently-built. But a FIPS-validated cryptographic module, it is not.

Ensuring Compliance

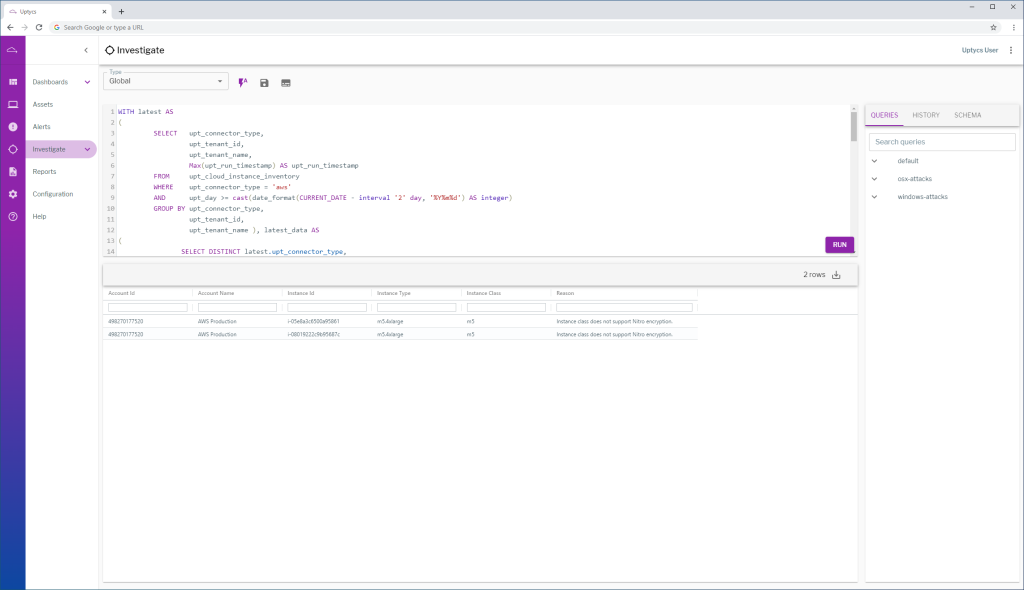

Now that we have a solution for encrypting our Kubernetes node traffic, let’s write a query in Uptycs that allows us to monitor for EC2 resources that are not utilizing encryption-capable Nitro instance classes.

    WITH latest AS (
        SELECT upt_connector_type,
               upt_tenant_id,
               upt_tenant_name,
               Max(upt_run_timestamp) AS upt_run_timestamp
        FROM upt_cloud_instance_inventory
        WHERE upt_connector_type = 'aws'
          AND upt_day >= cast(date_format(CURRENT_DATE - interval '2' day, '%Y%m%d') AS integer)
        GROUP BY upt_connector_type,
                 upt_tenant_id,
                 upt_tenant_name
    ), latest_data AS (
        SELECT DISTINCT latest.upt_connector_type,
               latest.upt_tenant_id,
               latest.upt_tenant_name,
               instance_id,
               instance_type,
               latest.upt_run_timestamp
        FROM upt_cloud_instance_inventory u
        JOIN latest
          ON (u.upt_connector_type = latest.upt_connector_type
              AND u.upt_tenant_id = latest.upt_tenant_id
              AND u.upt_tenant_name = latest.upt_tenant_name
              AND u.upt_run_timestamp = latest.upt_run_timestamp)
        WHERE instance_type IS NOT NULL
          AND latest.upt_connector_type = 'aws'
          AND upt_day >= cast(date_format(CURRENT_DATE - interval '2' day, '%Y%m%d') AS integer)
        GROUP BY latest.upt_connector_type,
                 latest.upt_tenant_id,
                 latest.upt_tenant_name,
                 instance_id,
                 instance_type,
                 latest.upt_run_timestamp
    ), latest_data_final AS (
        SELECT DISTINCT trim(rtrim(instance_type, replace(instance_type, '.', '')), '.') instance_class,
               latest.upt_connector_type,
               latest.upt_tenant_id,
               latest.upt_tenant_name,
               instance_id,
               instance_type,
               latest.upt_run_timestamp
        FROM latest_data u
        FULL JOIN latest
          ON (u.upt_connector_type = latest.upt_connector_type
              AND u.upt_tenant_id = latest.upt_tenant_id
              AND u.upt_tenant_name = latest.upt_tenant_name
              AND u.upt_run_timestamp = latest.upt_run_timestamp)
    )
    SELECT upt_tenant_id AS "Account Id",
           upt_tenant_name AS "Account Name",
           instance_id AS "Instance Id",
           instance_type AS "Instance Type",
           instance_class AS "Instance Class",
           'Instance class does not support Nitro encryption.' AS "Reason"
    FROM latest_data_final
    WHERE instance_id IS NOT NULL
      AND instance_class NOT IN ('c5n',
                                 'g4',
                                 'i3en',
                                 'm5dn',
                                 'm5n',
                                 'p3dn',
                                 'r5dn',
                                 'r5n')

Voilà! We get our results back and notice that there are two EC2 instances running outside of our allowed instance classes.

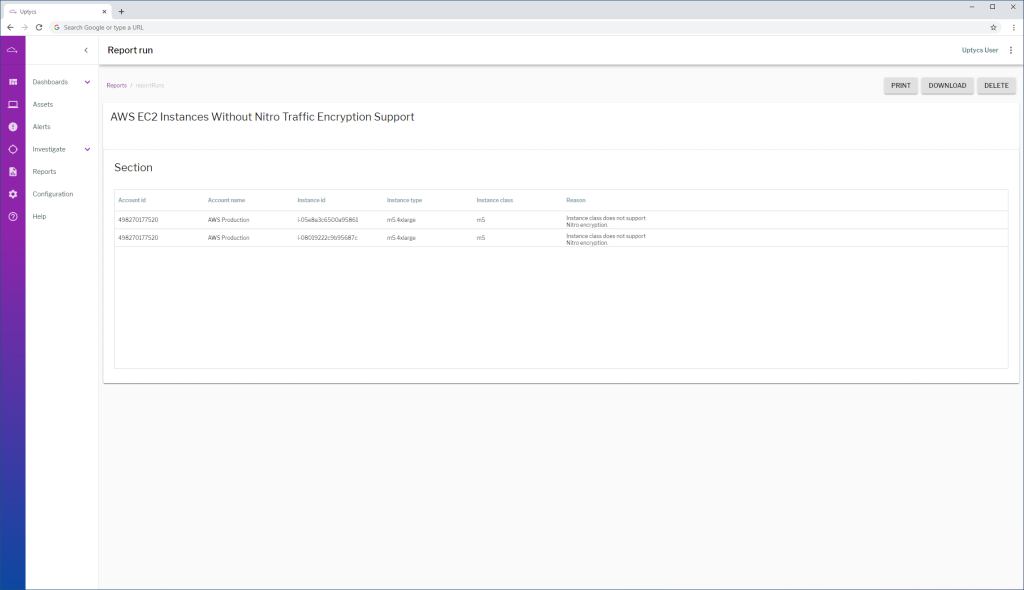
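The least obvious part of the query is the `trim(rtrim(instance_type, replace(instance_type, '.', '')), '.')` expression that derives the instance class. Here is a small Python sketch of the same string logic, assuming `rtrim(string, chars)` strips any trailing characters found in `chars` (as Python's `str.rstrip` does). The function name is hypothetical and exists only for illustration.

```python
def instance_class_like_query(instance_type):
    """Mimic the query's trim(rtrim(...)) expression.

    1. Build a character set from the instance type with the dots removed.
    2. Strip those characters from the right; stripping stops at the dot,
       because the dot is the only character not in the set.
    3. Strip the now-trailing dot.
    """
    strip_chars = instance_type.replace(".", "")
    return instance_type.rstrip(strip_chars).strip(".")


for example in ("c5n.large", "m5.xlarge", "g4dn.xlarge"):
    print(example, "->", instance_class_like_query(example))
```

Note the last example: an instance named `g4dn.xlarge` yields the class `g4dn`, which would not match a literal `'g4'` in the `NOT IN` list. If your inventory names G4 instances that way, you may want to adjust the list so they are not flagged by mistake.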

Now that we have a query written, let’s create a scheduled report and add a query section. Copy the query in and save.

You’re set! From here, Uptycs will monitor your EC2 instances automatically and notify you if any are not utilizing the encryption-capable Nitro instance classes.

If you'd like to get a closer look at Uptycs and our new integration with AWS, reach out for a tailored demo or submit a request to get a trial environment to explore at your own pace!
